Tool to compress / decompress with Huffman coding. Huffman coding is a data compression algorithm (lossless) which use a binary tree and a variable length code based on probability of appearance.

Huffman Coding - dCode

Tag(s) : Compression

dCode is free and its tools are a valuable help in games, maths, geocaching, puzzles and problems to solve every day!

A suggestion ? a feedback ? a bug ? an idea ? Write to dCode!

Huffman Coding

Huffman Decoder

Huffman Coding Generator

Answers to Questions (FAQ)

What is Huffman coding? (Definition)

Huffman coding is a lossless data compression technique based on the probabilities of symbol occurrence in a message.

It assigns each symbol a binary word of varying length, so that the most frequent symbols receive the shortest codes.

How to encrypt using Huffman Coding cipher?

Huffman coding begins by calculating the frequency of each symbol in the message and sorting the characters from most frequent to least frequent.

The message DCODEMESSAGE contains the letter E three times, the letters D and S twice, and the letters A, C, G, M, and O once each.

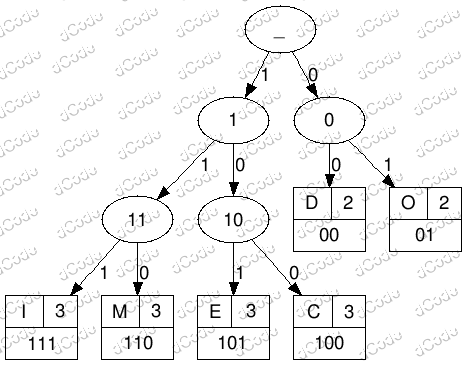

The Huffman algorithm creates a tree whose leaves are the found letters and whose values (or weights) are their number of occurrences in the message.

1 - Assign each symbol a node weighted by its frequency.

2 - Select the two nodes with the lowest weights.

3 - Merge them into a new node whose weight is the sum of the two.

4 - Repeat steps 2 and 3 until a single root node is obtained.

The binary code of a character is obtained by traversing the tree from the root to the leaf and noting the path (0 or 1) at each node.

The set of character-binary associations constitutes the dictionary.

Example: DCODEMOI generates a tree where D and the O, present most often, will have a short code. 'D = 00', 'O = 01', 'I = 111', 'M = 110', 'E = 101', 'C = 100', so 00100010010111001111 (20 bits)

The dictionary/codebook is inseparable from the message, without it, the message cannot be decoded.

How to decrypt Huffman Code?

Decoding the Huffman coding requires knowledge of the lookup tree or dictionary (characters ↔ binary codes).

1 - Read the bits one by one and traverse the tree from the root, following the bit path (0 or 1)

2 - As soon as a leaf is reached, display the corresponding symbol

3 - Repeat step 1

Example: Decode the message 00100010010111001111 with the dictionary described above. To research for 0 gives no correspondence, then continue with 00 which is code of the letter D, then 1 (does not exist), then 10 (does not exist), then 100 (code for C), etc.

The plain message is' DCODEMOI'

How to choose between left and right when constructing the Huffman tree?

When two nodes have the same weight in the construction of the Huffman tree, the choice of placing one on the left or right is arbitrary.

This choice has no impact on encoding performance: the average length remains the same, and the code's optimality is maintained.

However, this choice does modify the resulting binary codes: swapping left and right is equivalent to exchanging the 0 and 1 bits on a branch; the codes change, but their length remains the same.

In some implementations, additional rules are used to make the result deterministic (eg. using the lexicographic order of symbols), ensuring that the same dictionary is always obtained for the same message.

Why is Huffman used for compression?

Huffman coding is used because it reduces the average data size by exploiting symbol frequencies.

The average code length is close to the theoretical limit given by Shannon entropy.

The gain depends strongly on the symbol distribution: it is high if certain values are very frequent but low if the frequencies are uniform.

Note that for short messages, the storage cost of the tree or dictionary can reduce, or even negate, the compression gain.

How to recognize Huffman coded text?

The encoded message is in binary format (or in a hexadecimal representation) and must be accompanied by a correspondence tree/dictionary table for decryption.

The presence of variable length codes is an important feature.

The notions of a tree, tree structure or pruning (technique aiming to optimize the tree by dynamically removing branches/nodes) are clues.

How to decipher Huffman coding without the tree?

Without a tree or dictionary, decoding a Huffman message is generally impossible. This is because several different prefix codes can produce compatible binary sequences.

By making assumptions about the length of the message and the size of the binary words, it is possible to search for the probable list of words used by Huffman.

It should then be associated with the right letters, which represents a second difficulty for decryption and certainly requires automatic methods.

What are the variants of the Huffman cipher?

There are variants of Huffman when creating the tree / dictionary.

The dictionary can be static: each character / byte has a predefined code and is known or published in advance (so it does not need to be transmitted)

The dictionary can be semi-adaptive: the content is analyzed to calculate the frequency of each character and an optimized tree is used for encoding (it must then be transmitted for decoding). This is the version implemented on dCode

The dictionary can be adaptive: from a known tree (published before and therefore not transmitted) it is modified during compression and optimized as and when. The calculation time is much longer but often offers a better compression ratio.

Morse code uses variable length codes similar to Huffman coding.

When was Huffman coding invented?

The Huffman coding was published in 1952 by David Albert Huffman.

Source code

dCode retains ownership of the "Huffman Coding" source code. Any algorithm for the "Huffman Coding" algorithm, applet or snippet or script (converter, solver, encryption / decryption, encoding / decoding, ciphering / deciphering, breaker, translator), or any "Huffman Coding" functions (calculate, convert, solve, decrypt / encrypt, decipher / cipher, decode / encode, translate) written in any informatic language (Python, Java, PHP, C#, Javascript, Matlab, etc.) or any database download or API access for "Huffman Coding" or any other element are not public (except explicit open source licence). Same with the download for offline use on PC, mobile, tablet, iPhone or Android app.

Reminder: dCode is an educational and teaching resource, accessible online for free and for everyone.

Cite dCode

The content of the page "Huffman Coding" and its results may be freely copied and reused, including for commercial purposes, provided that dCode.fr is cited as the source (Creative Commons CC-BY free distribution license).

Exporting the results is free and can be done simply by clicking on the export icons ⤓ (.csv or .txt format) or ⧉ (copy and paste).

To cite dCode.fr on another website, use the link:

In a scientific article or book, the recommended bibliographic citation is: Huffman Coding on dCode.fr [online website], retrieved on 2026-05-14,

- Huffman Decoder

- Huffman Coding Generator

- What is Huffman coding? (Definition)

- How to encrypt using Huffman Coding cipher?

- How to decrypt Huffman Code?

- How to choose between left and right when constructing the Huffman tree?

- Why is Huffman used for compression?

- How to recognize Huffman coded text?

- How to decipher Huffman coding without the tree?

- What are the variants of the Huffman cipher?

- When was Huffman coding invented?